WEBLOG


June 30th, 2013

Headline

Hospitals Are Sued By 7 Foot Doctors

Did they keep hitting their heads?

Source: Robert Goralski, Press Follies: A Collection of Classic Blunders, Boners, Goofs, Gaffes, Pomposities, and Pretentions from the World of Journalism (1983)

June 29th, 2013

A Literacy Puzzle

Spell backwards, forwards.

June 19th, 2013

The Million Straw Man March

The first principle is that you must not fool yourself―and you are the easiest person to fool.―Richard Feynman, "Cargo Cult Science"

I suppose that it would be too much to expect of a book titled The Science Delusion that it be well-argued, or that it should attempt to appeal to the reader's reason instead of emotion. Judging by Mark O'Connell's review in Slate of Curtis White's new book (which I linked to in Resource 2, below), it appears to be a diatribe attacking science and even reason itself. Now, I've been burned once before by a misleading review in Slate attacking a book for supposedly being full of fallacious and anti-science arguments (see Resource 1, below), but I haven't read this book yet so I can't judge for myself. So, keep in mind that what I say below is based on the review. O'Connell certainly makes it sound like the kind of book that would fit right on The Fallacy Files' Shelf of Shame between Hitler: Neither Vegetarian nor Animal Lover and The Secret:

[White's] anger can be rhetorically persuasive, particularly when he’s taking on sci-tech evangelists for ignoring the extent to which post-Enlightenment rationality has been responsible for at least as much human suffering as religion. “In spite of its obsession with Jews,” he writes, “the horror of Nazism was not a religious nightmare; it was a nightmare of administrative efficiency.”

We've seen this kind of argument before: Paul Johnson was falsely accused of using it in his short biography of Darwin, but there's no doubt that some religious conservatives who reject the theory of evolution have attempted to blame it for Nazism (see the second "Update" to Resource 1, below). However, White appears to be generalizing the blame to science as a whole or to reason itself and, from what I can tell from the review, he's no religious conservative―O'Connell says specifically that he's a "non-believer".

Where does White―or O'Connell for that matter, who seems to agree with White on this point―get the notion that Nazism, or even "administrative efficiency", was some kind of manifestation of "post-Enlightenment rationality"? Given that Nazism was, in fact, an irrational creed based on pseudoscientific racism and a conspiracy theory, it's almost by definition not a manifestation of post-Enlightenment or any other kind of rationality. "You keep using that word. I do not think it means what you think it means."

That said, even if one could make a case that science or rationality led to Nazism, it's a type of appeal to consequences to argue that therefore science or rationality are incorrect. The moral to draw from the example of Nazism is not that science or rationality are as bad as religion, but that fanaticism is a bad thing in any form.

While the Hitler Card seems to slip by O'Connell, he does catch a couple of other fallacies:

It isn’t that his targets aren’t richly deserving of his wrath; it’s that it’s so often channeled into puzzlingly irrelevant ad hominem attacks and hastily constructed straw men. White has it in for the theoretical physicist and Nobel laureate Richard Feynman…. [White] plunges us down the off-ramp and starts zipping along the low road at a ferocious clip: “I hope you will agree that this is a very disappointing conclusion for someone who was almost as famous for playing the bongos and going to strip clubs as he was for physics.” The next time Feynman appears, he’s introduced as “bongo man Feynman.” Maybe I’m missing something here, but it’s not apparent to me how a person’s enthusiasm for bongos and strip clubs has any bearing on whether his scientific ideas are worth taking seriously. This is about as clear a case as I’ve ever encountered of playing the man rather than the ball. And it’s frustrating not because you feel Feynman is being unfairly traduced but because this stuff is just not worth talking about in this context; it’s a pointless diversion, and it erodes your faith in White as a navigator of the territory. A more serious problem is a tendency to oversimplify and caricature the views he’s engaging with. …[T]he book is so filled with reductive imaginings of ideas that are already sufficiently weak in their actual form that the whole enterprise seems in danger of becoming a Million Straw Man March on the citadel of scientism.

Of course, you might think that if you're really anti-reason, then it makes sense to write a whole book consisting of nothing but fallacious arguments. However, if you think "rationality" is the problem, then why argue at all? Why not just bash anyone who disagrees with you over the head with a club? In fact, I think I'd rather be hit on the head with a club than have to read this book! (There's a blurb for you.) Though one good thing you can say for fallacious arguments is that they at least give lip service to arguing: as counterfeits of reasoning they confirm the value of real reasoning, just as counterfeit money depends on the value of genuine money. Accept no wooden nickels!

Source: Richard Feynman, "Cargo Cult Science" (1974). This is a good time to read, or reread, Feynman's classic lecture on what he called "cargo cult science". Feynman was no naive enthusiast for anything claimed to be "science", as White seems to think. White's book might well be classified as "cargo cult philosophy": it has the form of philosophy―arguments, for instance―but the planes don't land.

Resources:

- New Book: Darwin, 11/17/2012

- Blurb Watch: The Science Delusion, 6/16/2013

Fallacies:

Update (7/12/2013): Curtis White, author of The Science Delusion, has written a response to O'Connell's Slate review, also published in Slate (see the Source, below). Of course, White deserves a chance to respond, and it's good of Slate to give him a soapbox to reply to its review, but there's not much to it. But don't take my word for it, read it yourself―there's not much to it!

I still haven't read the book, and this short article doesn't make me any more inclined to do so than I was before; if anything, less so. White's main claim is that he was kidding with the straw man arguments and ad hominem attacks. It's good to hear that he wasn't serious, but it doesn't increase my interest in the book. What's worse, however, is that in the course of trying to defend himself against accusations he commits some additional howlers. For instance:

I confess I have been surprised by several of the reviews of my new book, The Science Delusion. I have been described as an angry…critic of straw men and perpetrator of ad hominem attacks. Putting aside the possibility that these characterizations are themselves ad hominem attacks….

This is a weaselly way of trying to accuse his critics of engaging in ad hominem attacks against him without committing himself to it. He says he's "putting aside" that "possibility", but by saying so he's actually raising the possibility in his readers' minds. If he really wanted to put the issue aside, he wouldn't have mentioned it; rather, he's trying to have his cake and eat it too. If he really thinks O'Connell's criticisms were ad hominem, he should have had the courage to say so instead of insinuating it. O'Connell at least had the honesty to come right out and accuse White of ad hominems.

More importantly, by raising this claim, White shows that he doesn't understand what an ad hominem is. If accusing a writer of ad hominems were itself one, then it would never be possible to accuse anyone of committing such a fallacy without committing the fallacy oneself. That, of course, is absurd, but even more absurd is the fact that White's own insinuation would be an ad hominem: his own argument self-destructs! Perhaps that's why he couldn't commit himself to it.

What’s a straw man? A straw man is the misrepresentation of an argument so that it is easier to attack. For example, when Richard Dawkins makes his case against religion by reducing the whole enterprise to evangelical Bible thumpers and the Taliban. … What’s an ad hominem attack? It is the lowest form of critique in which the human is attacked rather than the argument. For example, when Richard Dawkins calls Michel Foucault a “francophony.”

So, his defense is: "Richard Dawkins does it, too!" That's apparently supposed to make it alright; otherwise, why mention it? This is the tu quoque fallacy, which is a type of ad hominem (see the Fallacy, below, for an explanation―more accurately, it's tu quoque by proxy, see the Resource, below). So, White stands convicted of committing a type of ad hominem in the course of trying to defend himself against the accusation of committing ad hominems. Despite the fact that I haven't read White's book, I find O'Connell's criticisms more convincing now: sometimes the defendant shouldn't take the stand in his own defense.

Source: Curtis White, "Ode to a Straw Man", Slate, 7/12/2013

Resource: Q&A, 6/17/2010

Fallacy: Tu Quoque

June 16th, 2013

Blurb Watch: The Science Delusion

For a change, let's look at a blurb for a book rather than a movie. I make this suggestion because an article in Slate (see the Source, below) just drew my attention to the new book The Science Delusion, by Curtis White―about which I'll have more to say later. To my surprise, the Kindle edition of the book has the following blurb on its "cover": "Splendidly cranky."―MOLLY IVINS. I was surprised because I seemed to recall that Ivins died some years ago. Are publishers now using mediums to get blurbs for their latest books?

In fact, Ivins died early in 2007. Has the book been in the works that long? It seems unlikely, as the copyright and publication dates on the Kindle edition are both this year. Instead, a web search reveals that Ivins is quoted on the author's earlier book, The Middle Mind: "A splendidly cranky academic." Presumably, this is a description of the book's author rather than the book, and the publisher just dropped the first and last words to make it sound as though Ivins was referring to the new book. Perhaps some people who are not aware of her death will purchase the book under the impression that she thought it "splendidly cranky", though I'm not so sure that crankiness is really a good quality for a book even if splendid.

This certainly opens up new possibilities for posthumous blurbing. Why stop with Ivins when there are so many other dead critics you could quote reviewing some other book than the one you need a blurb for?

Source: Mark O'Connell, "The Case Against Reason", Slate, 6/7/2013

June 12th, 2013

Wedding Bill Blues

From a Slate article by Will Oremus:

Weddings are expensive. There’s no way around it. … Just how expensive are they? …[M]y fiancée and I did what most couples do: We asked Google how much the typical wedding costs. The answer from all quarters―wedding sites, credible news outlets, the New York Post―is remarkably consistent, precise, and definitive. It is also grossly misleading, and almost certainly wrong. “Average wedding cost $28,400 last year,” reports CNN Money. “Average U.S. wedding costs $27,000!!” enthuses the New York Daily News. “Average cost of U.S. wedding hits $27,021,” declares Reuters, which should know better.

Regular readers of The Fallacy Files should be able to figure out one big problem with these figures without reading the rest of the article. If it isn't obvious to you, however, stay tuned.

…[A] problem with the average wedding cost is right there in the phrase itself: the word “average.” You calculate an average, also known as a mean, by adding up all the figures in your sample and dividing by the number of respondents. So if you have 99 couples who spend $10,000 apiece, and just one ultra-wealthy couple splashes $1 million on a lavish Big Sur affair, your average wedding cost is almost $20,000―even though virtually everyone spent far less than that. What you want, if you’re trying to get an idea of what the typical couple spends, is not the average but the median. That’s the amount spent by the couple that’s right smack in the middle of all couples in terms of its spending. In the example above, the median is $10,000―a much better yardstick for any normal couple trying to figure out what they might need to spend. Apologies to those for whom this is basic knowledge, but the distinction apparently eludes not only the media but some of the people responsible for the surveys. I asked Rebecca Dolgin, editor in chief of TheKnot.com, via email why the Real Weddings Study publishes the average cost but never the median. …“If the average cost in a given area is, let’s say, $35,000, that’s just it―an average. Half of couples spend less than the average and half spend more.” No, no, no. Half of couples spend less than the median and half spend more.

Actually, the situation is a bit worse than Oremus suggests, since "average" is ambiguous, with both the mean and median as possible meanings, though the most common meaning is probably the former, as it is here. As long as you're dealing with a normal distribution―that is, it forms the famous "bell curve" when graphed―the mean and median will be very close together, so that it doesn't matter much which you use. However, statistics involving money, such as incomes or how much people spend on weddings, are seldom normally distributed. Instead of forming a bell curve, such distributions will usually look more like a children's playground slide: a steep ascent followed by a gradual falling off. A few very high incomes, or very expensive weddings, will pull the mean higher than the median. For such skewed statistics, the median will give a better idea of a typical member of the distribution than the mean.
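For readers who'd like to check the arithmetic, here is a minimal sketch in Python of Oremus's hypothetical sample of one hundred couples. The variable names are mine, and the figures are his illustration, not survey data.

```python
from statistics import mean, median

# Oremus's hypothetical sample: 99 couples spend $10,000 apiece,
# and one ultra-wealthy couple spends $1 million.
costs = [10_000] * 99 + [1_000_000]

mean_cost = mean(costs)      # total spending divided by the number of couples
median_cost = median(costs)  # the middle value once the costs are sorted

print(f"mean:   ${mean_cost:,.0f}")    # mean:   $19,900
print(f"median: ${median_cost:,.0f}")  # median: $10,000
```

A single outlier nearly doubles the mean while leaving the median untouched, which is why the median is the better yardstick for a skewed distribution.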

Also, as Oremus discusses in the article―read the whole thing―the "average" wedding costs were taken from two surveys whose participants were not randomly selected, so it's possible that the samples were biased towards wealthy people who spend more on weddings than most people.

Source: Will Oremus, "The Wedding Industry’s Pricey Little Secret", Slate, 6/12/2013

Resources:

- "Average" Ambiguity, 11/4/2002

- How to Read a Poll

Fallacy: Biased Sample

June 10th, 2013

Check it Out

- (6/12/2013) Another couple of Slate articles worth noting concern Robert F. Kennedy, Jr.'s vaccination conspiracy theories (see the Sources, below). According to Phil Plait:

RFK Jr. has a long history of adhering to crackpot ideas about vaccines, mostly in the form of the now thoroughly disproven link to autism. … I’ll note former Rep. Dan Burton (R-Ind.)…has long advocated anti-vax quackery, even participating in shameful hearings in Congress about it. It goes to show you that some anti-science knows no partisan bounds; it’s hard to imagine many other issues the conservative congressman and Kennedy would agree on. That’s an important point. A lot of anti-science tends to be a wholly owned subsidiary of the Republican Party: climate-change denial, evolution denial, stem-cell research, anything going against the more fundamental tenets of religion. Some of these are actually incorporated into the party’s planks. The Democrats are more diverse (and haven’t embraced their own flavors of anti-science in the party planks), but progressives do cleave to some anti-science, most broadly things like being against GMO foods and supporting “alternative” medicine. This is peculiar to me; I’d think a priori most progressives would be more likely to embrace science, but in these specific cases, at least, they’ve abandoned it. I find that disappointing. I think of myself as a progressive in many ways, though I take things on a case-by-case basis. By definition a progressive wants to move forward, to look to the future, rather than look back to a semi-mythical “good ol’ days.” That progress must include embracing science, the evidence behind it, and the methodology that leads to a more accurate understanding.

Sources:

- Laura Helmuth, "So Robert F. Kennedy Jr. Called Us to Complain…", Slate, 6/11/2013

- Phil Plait, "Robert F. Kennedy Jr.: Anti-Vaxxer", Slate, 6/5/2013

Resource: Check it Out, 2/18/2013

- Because of all the current forensic science television police shows, people seem to have an exaggerated idea of what can be done with DNA evidence. Fortunately, mathematician Jordan Ellenberg's latest Slate article helps explain why the reality is not as simple as what you see on TV:

It’s nice to imagine a world in which cracking a case means grabbing a fabric swatch from the crime scene, scanning it with the help of CheekSwab.gov, and then getting a report with the criminal’s name, address, photo, and last 10 tweets. But it’s not going to be that easy. Simple example: You get DNA from a hair found at the scene of the crime and find six usable places in the genome to test. The chance that any given person is a genetic match at those six places is pretty small, say 1 in 5 million. Now you run the sample through your database and you’re a happy detective because you find just one match. We got him! And when you try the case, the number “1 in 5 million” is going to be front and center. When the DA rips open his dress shirt at the culminating moment of his closing statement, “1 in 5 million” is what’s printed on the tank top underneath. That’s how I imagine it, anyway. But that number, impressive as it is, isn’t the right one.

To find out what the right number is, you'll just have to read the whole thing. Ellenberg is dealing here with the so-called "prosecutor's fallacy", though not under that misleading name. He also mentions the new book Math on Trial, which I pointed to a couple of months ago (see the Resource, below).
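Without spoiling Ellenberg's own calculation, the general shape of the problem can be sketched in a few lines of Python. Only the "1 in 5 million" figure comes from his example. The population size, the assumption of exactly one culprit, and the assumption that everyone is equally likely to be the culprit before the DNA test are all mine, chosen purely for illustration.

```python
# From Ellenberg's example: the chance that a given innocent person matches.
match_probability = 1 / 5_000_000

# ASSUMPTION (not from the article): how many people could have left the hair.
population = 300_000_000

# Expected number of innocent people who would match purely by coincidence.
expected_innocent_matches = (population - 1) * match_probability

# ASSUMPTIONS: exactly one culprit, who matches for certain, and before the
# test every person in the population is equally likely to be the culprit.
# Bayes' theorem then gives the chance that a matching person is guilty.
prob_guilty_given_match = 1 / (1 + expected_innocent_matches)

print(f"expected coincidental matches: {expected_innocent_matches:.1f}")
print(f"chance a matching person is guilty: {prob_guilty_given_match:.1%}")
```

Under these made-up assumptions, about sixty innocent people would match by coincidence, so a match alone makes guilt far less certain than "1 in 5 million" suggests. The number that matters is the chance of innocence given a match, not the chance of a match given innocence.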

Source: Jordan Ellenberg, "Doubt and the Double Helix", Slate, 6/5/2013

Resources:

- The Prosecutor's Fallacy, 10/30/2006

- New Book: Math on Trial, 4/3/2013

June 3rd, 2013

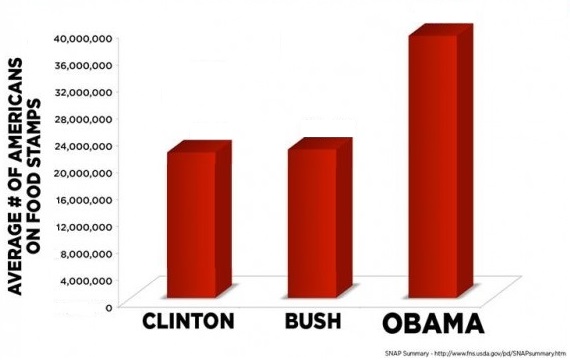

Charts & Graphs: The 3D Bar Chart, Part 1

Get out your red-and-blue tinted glasses, it's time for the next installment of our series on how to mislead people with graphs. Last time, we looked at how pie charts in which the "pie" has a three-dimensional appearance, instead of being a simple circle, can give a false impression of the relative sizes of its "slices". Something similar can happen when an extra dimension is added to a bar chart, though you won't actually need any special glasses to see such a graph because, as with the pie type, perspective is added to the chart to create the 3D effect. Usually, the extra dimension adds no additional information―it's just there to make the chart more visually appealing―but the added dimension can sometimes distort the information contained in the chart. Of course, this may be the result of careless or ignorant chartmaking, but sometimes it may be intentional distortion.

There are two ways that this kind of chart may be misleading; in this installment we'll look at the first, and the second type will be the subject of our next entry in the series. In some 3D bar charts, the perspective is such that you seem to look down upon the bars from above so that you can see their tops. More technically, the vanishing point or points on the horizon in the graph are above the tops of the bars.

For an example, see the chart above and to the right. This is a revision of a misleading chart that lacked a zero baseline (see Source 1 & Previous Entry 2, below), which was a more serious fault, but the revision retains the overhead perspective of the original. If you try to gauge the number of food stamp recipients under Obama from the chart using the naked eye, it will probably appear to be in excess of 40 million, the top number on the scale. The actual number from the original chart is slightly less than 40 million, but the fact that the back of the bar extends above the 40 million mark makes it difficult to visually judge the height of the bar. So, at best, a 3D bar graph with an overhead perspective makes it difficult to accurately judge the heights of its bars; at worst, it can lead to an exaggerated notion of the size of some of the bars, which seems to have been the intention of the original version of this chart.
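To see how the back edge of a bar can climb past a gridline that the bar itself never reaches, here is a rough Python sketch. It assumes a simple oblique projection, in which depth is drawn as a slanted offset, rather than the true perspective used in the chart. The function name and all of the numbers are hypothetical, not measurements taken from the chart.

```python
import math

def apparent_top(value: float, depth: float, angle_degrees: float) -> float:
    """Height, on the chart's own scale, at which the back edge of a bar's
    top face is drawn under a simple oblique projection.

    ASSUMPTION: depth is measured in the same units as the vertical scale,
    and receding edges are drawn at `angle_degrees` above the horizontal.
    """
    return value + depth * math.sin(math.radians(angle_degrees))

true_value = 39.0  # hypothetical: the bar's real height, just under 40
depth = 4.0        # hypothetical: how "thick" the bar is drawn
angle = 30.0       # hypothetical: slant of the receding edges

apparent = apparent_top(true_value, depth, angle)
print(f"front edge at {true_value:.1f}, back edge drawn at about {apparent:.1f}")
```

With these made-up numbers, the front of the bar stops short of 40 while the back edge is drawn at about 41, so an eye that follows the highest visible edge will overestimate the bar.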

As is true of all the types of charts and graphs in this series, there's no hard-and-fast rule against using perspective in bar charts to make them more attractive. Rather, both as maker and as consumer of bar charts, you should keep in mind the potential for distortion that is introduced into a chart by adding a third dimension, and make allowances to avoid that―unless, of course, your goal is to mislead people, which I don't recommend.

Sources:

- Charts and Graphs, 6/4/2012

- Gerald E. Jones, How to Lie with Charts (2000), pp. 78-80

Previous Entries in this Series:

- The Gee-Whiz Line Graph, 3/21/2013

- The Gee-Whiz Bar Graph, 4/4/2013

- Three-Dimensional Pie, 5/5/2013

Next Entry in this Series: The 3D Bar Chart, Part 2

Did you think the answer was:

- "b, a, c, k, w, a, r, d, s"? Wrong! You must be illiterate and, therefore, ineligible to vote.

- "s, d, r, a, w, r, o, f"? Wrong! You must be illiterate and, therefore, ineligible to vote.

- "b, a, c, k, w, a, r, d, s, f, o, r, w, a, r, d, s"? Wrong! You must be illiterate and, therefore, ineligible to vote.

So, what is the answer? It's a trick question. The question is ambiguous, and any one of the above three answers could be plausibly said to be the intended answer. As a result, any answer that you choose can be plausibly said to be the wrong one.
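The three readings can be sketched as three different operations on strings. This is only an illustration in Python: the helper `spell` is my own invention, and nothing like it appears on the test.

```python
def spell(word: str) -> str:
    """Spell a word by listing its letters in order, separated by commas."""
    return ", ".join(word)

# Reading 1: spell the word "backwards", going forwards.
reading_1 = spell("backwards")

# Reading 2: spell the word "forwards", going backwards.
reading_2 = spell("forwards"[::-1])

# Reading 3: spell both words, one after the other.
reading_3 = spell("backwards" + "forwards")

for reading in (reading_1, reading_2, reading_3):
    print(reading)
```

Each reading is a perfectly sensible instruction that produces a different answer, and the question as worded doesn't say which one is wanted.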

According to an article in Slate by Rebecca Onion (see the Source, below), the question is taken from a literacy test given in the 1960s by the state of Louisiana to prospective voters who could not "prove a fifth grade education". The test contained thirty questions and had to be completed in ten minutes, and a single incorrect answer counted as failure. Failing the test would lead to the test-taker being barred from voting.

Now, many of the questions on the test are straightforward, could be easily answered by any person who understood them, and could therefore be considered legitimate tests of English literacy. Consider, for instance, question 3: "Cross out the longest word in this line." However, with thirty questions and a ten-minute time limit, a test-taker would have to answer three questions a minute in order to complete the test on time. If all thirty questions had been like question 3, this might have been possible. However, in addition to trick questions, there are a few difficult ones. For instance, one that threw me when I took the test was question 24: "Print a word that looks the same whether it is printed frontwards or backwards." The question itself is clear: it's asking for a word that is a palindrome, but many literate people may not be able to think of such a word within the time limit―I know that I couldn't, and would therefore have flunked the test! It's easier to think of famous phrases that are palindromes rather than single words, for instance: "A man, a plan, a canal―Panama!" But no word in that phrase, apart from the one-letter "a", is itself a palindrome. Now, if I'd happened to think of another such phrase, the alleged first words of Adam to Eve: "Madam, I'm Adam", then I could have realized that "Madam" is a palindrome. But I didn't.
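A computer, unlike a flustered test-taker, can check the two famous phrases in an instant. The Python sketch below is only an illustration. The function names are mine, and skipping one-letter words is my own assumption, since a single letter such as "a" or "I" reads the same both ways trivially.

```python
import re

def is_palindrome(text: str) -> bool:
    """True if the letters of `text` read the same in both directions,
    ignoring case, spaces, and punctuation."""
    letters = [ch.lower() for ch in text if ch.isalpha()]
    return letters == letters[::-1]

def palindromic_words(phrase: str, min_length: int = 2) -> list[str]:
    """Words in `phrase` that are palindromes on their own.

    ASSUMPTION: words shorter than `min_length` are skipped as trivial.
    """
    words = re.findall(r"[A-Za-z]+", phrase)
    return [w for w in words if len(w) >= min_length and is_palindrome(w)]

panama = "A man, a plan, a canal: Panama!"
adam = "Madam, I'm Adam"

print(is_palindrome(panama), palindromic_words(panama))  # True []
print(is_palindrome(adam), palindromic_words(adam))      # True ['Madam']
```

Both phrases are palindromes as wholes, but only the second contains a word of more than one letter that would have answered question 24.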

In addition to the "backwards, forwards" trick question, there are a few other problematic questions on the test. For instance, question 25 is based on a famous "optical illusion", and many people not familiar with it, or in a hurry to finish the test, would leave out the second "the", writing: "Paris in the spring." Such a mistake would have nothing to do with illiteracy; in fact, a literate person who reads the entire sentence at a glance would be much more likely to make this mistake than a semi-literate person who has to work through it word-by-word.

Another trick question on the test is question 27: "Write right from the left to the right as you see it spelled here." This could be asking you to write at least four different things:

- "right": This interpretation doesn't make much sense, as how else are you going to write it other than "from the left to the right", and how else are you going to spell it than "as you see it spelled here"? Those don't make much sense as part of the instructions since they go without saying.

- "right from the left": Presumably, you would write this to the right of the question. Again, how else are you going to spell it?

- "right from the left to the right": Once again, how else are you going to spell it? Also, though "from the left to the right" doesn't make much sense as part of the instructions, it makes even less sense as part of what you're supposed to write.

- "right from the left to the right as you see it spelled here": Most of this makes more sense as part of the instructions than as what you're supposed to write.

According to Onion, these ambiguous questions were used as a way of preventing blacks from passing the literacy test and being able to vote. No matter how these questions were answered, the person grading the test could count any answer to one of the trick questions as incorrect, thus causing the test-taker to flunk the test.

We've seen a couple of puzzles previously using this type of ambiguity, which is based on what logicians call "the use/mention distinction" (see the Resources, below, for the puzzles and an explanation of the distinction). The ambiguity could be resolved by using either quotation marks or italics to indicate when a word or phrase is being mentioned as opposed to used. For instance, the puzzle question could have been punctuated as follows:

Spell "backwards", forwards.

It would have then been clear that the correct answer to the question was number 1, above. Similarly, question 27 could have been written in this way:

Write "right" from the left to the right as you see it spelled here.

Again, it would be obvious that the correct answer is the first one, above. The question still wouldn't make much sense, but at least it wouldn't be ambiguous.

Source: Rebecca Onion, "Take the Impossible 'Literacy' Test Louisiana Gave Black Voters in the 1960s", Slate, 6/28/2013

Resources:

- Puzzle Number 1, 2/22/2009

- Puzzle Number 2, 2/23/2009

Update (7/3/2013): There seems to be some doubt about the historical provenance of this test. The only copies available are second- or third-hand, whereas if the test were used by the state of Louisiana it ought to be possible to find an official copy. Onion is searching for such a copy (see the Source, below), but in the meantime the claim that this particular test was used in Louisiana to prevent black people from voting should be treated as an unproven allegation.

Source: Rebecca Onion, "Update: On the Hunt for the Original Louisiana Literacy Test", Slate, 7/3/2013